The concept of “artificial intelligence” seems to be everywhere, and the market research industry is no exception. Every day, it seems like we inch closer to the dystopia that The Twilight Zone warned us about decades ago: the rise of the machines!

But the robots aren’t orchestrating an uprising the way sci-fi envisioned. It’s just that they are increasingly—and in some ways, quietly—replacing the tasks we humans have become accustomed to taking on ourselves.

In fact, it’s not all bad. There are real cost savings in automating tasks and developing AI to streamline processes that used to be tedious and expensive. Modern AI is making many of our lives easier and more productive.

But there are things that machines do well, and things that they don’t. And until machines can understand nuance, context and emotion, we need to be realistic about what can be truly outsourced to the automatons, and what needs to be kept in-house with the flesh-and-blood.

Big data, deep data, wide data

Market research is not a big-data environment. “Big data,” properly understood, is data that runs wide but shallow. That is, the data might cover a very large population, but it tends to be limited in depth. A “big” database might know a little bit about a whole bunch of Honda Accord owners, for example. It might know purchase price, dealer location, and household income. But it doesn’t know a great deal about any single Honda Accord owner. Why did he or she purchase the vehicle? What mattered most to him or her when shopping for a new car? What sensibilities was he or she factoring into the purchase, beyond list price and features?

Market research, on the other hand, strives to understand a lot about a smaller (but statistically representative) sample of individuals. Market researchers want to know why certain Honda Accord owners purchased that automobile over its competitors, what might motivate them to buy another, what could move them to consider alternatives, and what emotions they experienced throughout the customer journey.

So consider a very basic computing task, such as sorting all Honda Accord owners by zip code. Machines can do that instantaneously; you would never, in the modern era, assign that task to a person. But consider a market research question, such as determining the main emotional driver that makes a mother of three prefer an SUV to an economy sedan. A machine cannot draw that conclusion reliably enough for it to be actionable or repeatable.

Score one for the human race.

Where does AI hand it back to humans?

Advancements are being made every day to help computers understand and interpret inputs the way humans do. And while we are light years ahead of where we were just 30 years ago, market researchers should be careful not to get caught up in the novelty and technological whiz-bang. If we leave data analysis to entities that truly can’t reason or experience emotion, we risk the proverbial “garbage in, garbage out.”

Metaphorical robots are doing an admittedly remarkable job of collecting the data. From logic programming to decision trees to chatbots that can practically “think on their feet,” AI technology is making respondent data collection more and more reliable. The problem often arises when those tools are charged with understanding and interpreting the information they gather.

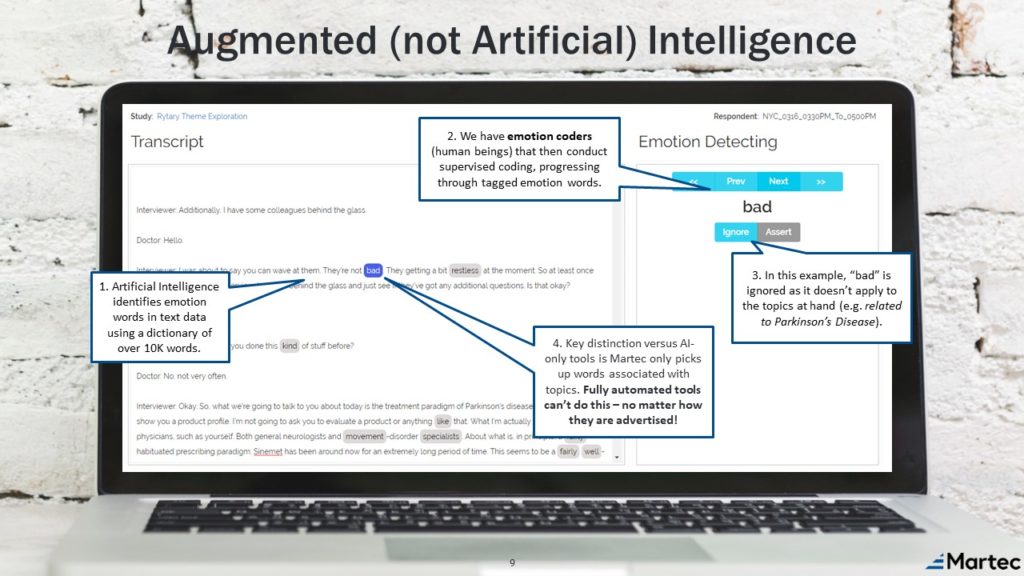

We recently encountered an example in which AI software was attempting to perform sentiment analysis on a real-time conversation, much as software is deployed to mine sentiment from conversations online and on social media. The software assigns sentiment values to the text according to its algorithms. But in this case, a false negative was injected into the sample because the software misinterpreted a word choice!

As you can see, the AI detected the word “bad” and immediately assigned it a negative connotation. What it didn’t understand was context. No human would have misread this, yet the machine potentially injected a fatal flaw into the data set. First, it missed the critical qualifier “not” before “bad”: the respondent was saying that something was “not bad,” so the AI got the nuance entirely backward. Second, the remark was actually a reaction to a noise entirely external to the market research, a noise outside the room! It had nothing to do with the study being conducted, and it expressed positive sentiment. Yet the AI was perfectly ready to record a negative sentiment as a legitimate input into the data set.
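The first mistake is easy to reproduce with a naive keyword approach. The Python sketch below is illustrative only: the tiny lexicon, the negator list, the two-word lookback window, and the function names are our own hypothetical choices, not the software from the example or any particular product. It contrasts word-by-word scoring with a simple negation-aware variant.

```python
import re

# Hypothetical toy lexicon; real sentiment tools use far larger, weighted lexicons.
LEXICON = {"good": 1, "great": 1, "love": 1, "bad": -1, "awful": -1, "hate": -1}
# Hypothetical list of negating words.
NEGATORS = {"not", "never", "no", "isn't", "wasn't", "don't"}


def tokenize(text):
    # Lowercase and split into word tokens, keeping apostrophes inside words.
    return re.findall(r"[a-z']+", text.lower())


def naive_score(text):
    # Sum lexicon values word by word, ignoring context entirely.
    return sum(LEXICON.get(tok, 0) for tok in tokenize(text))


def negation_aware_score(text, window=2):
    # Flip a word's polarity if a negator appears within `window` tokens before it.
    tokens = tokenize(text)
    score = 0
    for i, tok in enumerate(tokens):
        value = LEXICON.get(tok, 0)
        if value and any(t in NEGATORS for t in tokens[max(0, i - window):i]):
            value = -value
        score += value
    return score


phrase = "That's not bad at all."
print(naive_score(phrase))           # -1: "bad" is counted as negative
print(negation_aware_score(phrase))  # 1: "not" flips "bad" to positive
```

Even the negation-aware version fixes only the first mistake. No amount of word-level scoring would reveal that the remark was aimed at a noise outside the room, which is exactly where human review earns its keep.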

Had the researcher relied on AI alone here, you can see how the findings would have gone wrong. Instead, a human should oversee the analysis, if not conduct it outright, so that heartless machines don’t blindly get it wrong without so much as a second guess.

Don’t set your research on autopilot

If you know any airline pilots, they will tell you that they are paid to take off and to land…most of what happens in between is done by computers. Perhaps the same paradigm can be applied to AI’s role in market research.

Let’s face it: machines can do a lot of things nowadays, but even a Turing test will expose their limitations. They will miss context. They will fail to pick up on non-verbal cues or tone of voice. They will misinterpret text. And when it comes to the most critical parts of the market research process (constructing a survey to understand human emotions, and analyzing the emotions that the survey results reveal), they’re just not there yet. Emotion and nuance should be built into the screener and the survey design, then applied again when vetting the recorded data…even if machines are entrusted to collect it. Humans handle the critical takeoff and landing; computers handle the drudgery.

So yes, let’s leverage modern technology to turn a 10-step process into a 5-step process. We’ll all save time, money and tedium along the way. But let’s not fire humans from doing what we still do best…and that’s understanding other humans.

We call this concept “augmented intelligence”: leveraging artificial intelligence while applying human understanding and emotional analysis to optimize market research outcomes. And we invite you to pick our human brains on how you can apply it to your next market research project.
